The question is no longer whether AI can make software development faster. The better question is: which parts of the development process become faster, and where must speed not come at the cost of quality?

In professional projects, the biggest time savings rarely come from "AI writes code". The larger lever sits before and after coding: clarifying requirements, comparing technical options, preparing tests, finding edge cases, writing documentation and making pull requests easier to review.

Where software projects lose time

Projects rarely lose time because people type too slowly. They lose it through unclear handoffs and postponed work:

- tickets are vague

- acceptance criteria are missing

- architecture decisions are not documented

- tests are written too late

- reviews catch the same issues repeatedly

- documentation remains unfinished

- small technical debt accumulates

AI can help precisely there, provided it is integrated into the process. It can formulate questions, expose assumptions, compare options and prepare repeatable work.

Acceleration across the development lifecycle

1. Discovery and requirements

AI can structure meeting notes, existing documents and user feedback into open questions, hypotheses and first drafts of user stories. A human decides what is correct; AI reduces the friction before that decision.

Example: a workshop transcript becomes epics, risks, data objects and open questions. That saves preparation time and makes product decisions visible earlier.
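One way to make that output reviewable is to give it a fixed structure. The sketch below assumes the AI result is parsed into Python objects; the class names, the four categories and the `confirmed` flag are illustrative choices, not a prescribed format. The flag keeps the human decision explicit: nothing counts as agreed until someone has reviewed it.

```python
from dataclasses import dataclass, field

# Hypothetical item categories, taken from the example above.
ALLOWED_KINDS = {"epic", "risk", "data_object", "open_question"}


@dataclass
class DiscoveryItem:
    kind: str                # one of ALLOWED_KINDS
    text: str                # the drafted epic, risk, data object or question
    source: str              # where in the transcript the item came from
    confirmed: bool = False  # set to True only after a human has reviewed it

    def __post_init__(self) -> None:
        if self.kind not in ALLOWED_KINDS:
            raise ValueError(f"unknown kind: {self.kind!r}")


@dataclass
class DiscoveryResult:
    items: list[DiscoveryItem] = field(default_factory=list)

    def by_kind(self, kind: str) -> list[DiscoveryItem]:
        """All items of one category, e.g. every open question."""
        return [item for item in self.items if item.kind == kind]

    def unconfirmed(self) -> list[DiscoveryItem]:
        """Items that still need a human decision."""
        return [item for item in self.items if not item.confirmed]


# Invented example data.
result = DiscoveryResult([
    DiscoveryItem("epic", "Self-service onboarding", "transcript 00:12"),
    DiscoveryItem("risk", "Legacy CRM has no API", "transcript 00:27", confirmed=True),
    DiscoveryItem("open_question", "Who approves refunds?", "transcript 00:34"),
])
print(len(result.unconfirmed()))  # 2 items still need a human decision
```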

2. Architecture and technical options

AI can compare architecture options: a monolith or separate modules, REST or GraphQL, a custom service or an existing platform. It should not make the final decision; it should provide a structured basis for one.

A good output does not just say "use Next.js". It explains the criteria behind a recommendation: team knowledge, SEO, authentication, operations, data volume, integrations and maintenance.
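A simple way to make such criteria explicit is a weighted decision matrix. The sketch below is an assumption about how a team might record the comparison, not a method from a specific tool; the criteria subset, weights and ratings are invented. Its value is that every weight is visible and can be challenged in review.

```python
def weighted_scores(
    weights: dict[str, float],
    scores: dict[str, dict[str, float]],
) -> dict[str, float]:
    """Weighted average score per option.

    weights: importance of each criterion (any positive numbers).
    scores:  per option, a rating for every criterion (e.g. 1 to 5).
    """
    total_weight = sum(weights.values())
    if total_weight <= 0:
        raise ValueError("weights must sum to a positive number")
    result = {}
    for option, ratings in scores.items():
        missing = weights.keys() - ratings.keys()
        if missing:
            # Force every option to be rated on every criterion.
            raise ValueError(f"{option} is missing ratings for {sorted(missing)}")
        result[option] = sum(w * ratings[c] for c, w in weights.items()) / total_weight
    return result


# Invented example values: the team sets weights and ratings, not the tool.
weights = {"team_knowledge": 3, "integrations": 2, "maintenance": 1}
scores = {
    "REST": {"team_knowledge": 5, "integrations": 4, "maintenance": 4},
    "GraphQL": {"team_knowledge": 2, "integrations": 4, "maintenance": 3},
}
ranking = weighted_scores(weights, scores)
print(ranking)  # REST 4.5, GraphQL about 2.83
```

A score like this structures the discussion; it does not replace it. If a small change in one weight flips the ranking, that is a signal to talk, not to pick the higher number.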

3. Implementation

During implementation, AI is strongest on repeatable tasks:

- component variants

- forms and validation

- data mapping

- API clients

- test cases

- migration scripts

- build pipeline fixes

Deep domain logic remains a human responsibility. AI can prepare it, but the team owns it.
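Data mapping is a typical example of such repeatable work. The sketch assumes a hypothetical order API that returns camelCase fields, amounts in cents and ISO 8601 timestamps; the field names and the `Order` type are invented for illustration.

```python
from dataclasses import dataclass
from datetime import date
from decimal import Decimal


@dataclass(frozen=True)
class Order:
    order_id: str
    customer_email: str
    total: Decimal      # in the currency's main unit, e.g. euros
    ordered_on: date


def map_order(payload: dict) -> Order:
    """Map a hypothetical API payload to the Order domain object."""
    return Order(
        order_id=str(payload["orderId"]),
        # Normalize e-mail addresses so lookups are case-insensitive.
        customer_email=payload["customerEmail"].strip().lower(),
        # Decimal avoids floating-point rounding errors for money.
        total=Decimal(payload["totalCents"]) / 100,
        # Keep only the calendar date from the ISO 8601 timestamp (no time zone conversion).
        ordered_on=date.fromisoformat(payload["orderedAt"][:10]),
    )


order = map_order({
    "orderId": 42,
    "customerEmail": " Ana@Example.com ",
    "totalCents": 1999,
    "orderedAt": "2024-05-03T10:15:00Z",
})
```

The mechanical part is easy to generate. The choices hidden in it, such as whether lowercasing e-mail addresses is acceptable or whether the order date should be taken in UTC or local time, are domain decisions the team has to own.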

4. Tests and quality assurance

Tests are one of the strongest AI use cases. An AI agent can suggest missing test cases, extend existing tests and systematically collect edge cases. This makes quality visible earlier.

Useful test areas include:

- empty inputs

- permission cases

- error states

- network problems

- localization

- date and number formats

- mobile layouts
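As an illustration of collecting edge cases systematically, the sketch below tests a hypothetical `parse_amount` function against empty inputs, localized number formats and invalid values. The function, its locale table and the cases are assumptions; the point is the shape: a compact table of edge cases that an AI agent can propose and a developer can review line by line.

```python
import unittest
from decimal import Decimal, InvalidOperation

# Hypothetical separator table: (grouping, decimal) per locale.
# A production system would rather rely on a locale library such as Babel.
SEPARATORS = {"de-DE": (".", ","), "en-US": (",", ".")}


def parse_amount(text: str, locale: str) -> Decimal:
    """Parse a user-entered amount like '1.234,56' (de-DE) or '1,234.56' (en-US)."""
    if locale not in SEPARATORS:
        raise ValueError(f"unsupported locale: {locale}")
    if not text or not text.strip():
        raise ValueError("empty input")
    group, decimal_sep = SEPARATORS[locale]
    normalized = text.strip().replace(group, "").replace(decimal_sep, ".")
    try:
        value = Decimal(normalized)
    except InvalidOperation:
        raise ValueError(f"not a number: {text!r}") from None
    if not value.is_finite():  # Decimal also accepts 'NaN' and 'Infinity'
        raise ValueError(f"not a finite number: {text!r}")
    return value


class ParseAmountEdgeCases(unittest.TestCase):
    def test_locale_formats(self):
        cases = [
            ("1.234,56", "de-DE", Decimal("1234.56")),
            ("1,234.56", "en-US", Decimal("1234.56")),
            ("-0,5", "de-DE", Decimal("-0.5")),
            ("  42 ", "en-US", Decimal("42")),
        ]
        for text, locale, expected in cases:
            with self.subTest(text=text, locale=locale):
                self.assertEqual(parse_amount(text, locale), expected)

    def test_rejected_inputs(self):
        for text in ["", "   ", "abc", "NaN"]:
            with self.subTest(text=text):
                with self.assertRaises(ValueError):
                    parse_amount(text, "en-US")

    def test_unknown_locale(self):
        with self.assertRaises(ValueError):
            parse_amount("1", "fr-FR")
```

`subTest` reports each failing case separately, so one broken format does not hide the others. Permission cases, network errors and mobile layouts need their own fixtures and are not covered here.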

5. Review and documentation

AI can summarize pull requests, flag risk areas and prepare changelogs. This does not remove reviews. It makes them more focused.

Good review questions are:

- Which files changed unexpectedly?

- Are there new dependencies?

- Are tests missing for critical paths?

- Is behavior backward compatible?

- Are there privacy or security effects?
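Some of these questions can be answered mechanically before a human reviewer starts. The sketch below assumes a JavaScript/TypeScript project with a `package.json` and a list of changed paths (for example from `git diff --name-only`); the glob conventions and function names are hypothetical. The output is input for the review, not a verdict.

```python
import json
from fnmatch import fnmatchcase


def _matches(path: str, globs: list[str]) -> bool:
    # fnmatchcase: case-sensitive on every OS; '*' also matches '/'.
    return any(fnmatchcase(path, g) for g in globs)


def unexpected_files(changed: list[str], expected_globs: list[str]) -> list[str]:
    """Changed paths outside the areas the ticket said would be touched."""
    return [p for p in changed if not _matches(p, expected_globs)]


def new_dependencies(old_package_json: str, new_package_json: str) -> set[str]:
    """Package names added to dependencies or devDependencies."""
    def names(text: str) -> set[str]:
        data = json.loads(text)
        return set(data.get("dependencies", {})) | set(data.get("devDependencies", {}))
    return names(new_package_json) - names(old_package_json)


def critical_change_without_tests(
    changed: list[str], critical_globs: list[str], test_globs: list[str]
) -> bool:
    """True if a critical path changed but no test file changed with it."""
    touches_critical = any(_matches(p, critical_globs) for p in changed)
    touches_tests = any(_matches(p, test_globs) for p in changed)
    return touches_critical and not touches_tests


changed = ["src/checkout/cart.ts", "infra/deploy.yml"]
print(unexpected_files(changed, ["src/checkout/*"]))  # ['infra/deploy.yml']
print(critical_change_without_tests(changed, ["src/checkout/*"], ["*.test.ts"]))  # True
```

The dependency check only detects added package names; version bumps and transitive changes in the lockfile need separate checks.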

Speed needs measurement

Companies should not judge AI by whether it "feels faster". Better metrics are:

| Metric | What it shows |
|---|---|
| Cycle time | How fast work moves from start to merge |
| Review duration | Whether pull requests become clearer and smaller |
| Defect rate | Whether speed creates more bugs |
| Critical-path test coverage | Whether quality is secured earlier |
| Rework | Whether requirements were understood better |
| Documentation quality | Whether knowledge is preserved |

Without measurement, AI can create a demo effect: everyone is impressed, but nobody knows whether the product improved.
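The first metrics can be computed from data most teams already have. The sketch assumes pull-request records exported from a Git hosting platform into the hypothetical `PullRequest` shape below; which timestamp counts as "start" and how defects are linked to merges are team decisions. Comparing the output for a baseline period with the pilot period answers the question the demo effect leaves open.

```python
from dataclasses import dataclass
from datetime import datetime
from statistics import median


@dataclass
class PullRequest:
    started_at: datetime           # hypothetical: e.g. ticket moved to "in progress"
    review_requested_at: datetime
    merged_at: datetime
    caused_defect: bool            # linked to a bug found after merge


def _hours(start: datetime, end: datetime) -> float:
    return (end - start).total_seconds() / 3600


def summarize(prs: list[PullRequest]) -> dict[str, float]:
    """Median cycle time and review duration in hours, plus the defect rate."""
    if not prs:
        raise ValueError("no pull requests in this period")
    return {
        "cycle_time_h": median(_hours(p.started_at, p.merged_at) for p in prs),
        "review_duration_h": median(_hours(p.review_requested_at, p.merged_at) for p in prs),
        "defect_rate": sum(p.caused_defect for p in prs) / len(prs),
    }


# Invented example data for a baseline period.
baseline = [
    PullRequest(datetime(2024, 1, 1, 9), datetime(2024, 1, 1, 15), datetime(2024, 1, 2, 9), False),
    PullRequest(datetime(2024, 1, 1, 9), datetime(2024, 1, 1, 11), datetime(2024, 1, 1, 13), True),
]
print(summarize(baseline))
```

The median is used because a few long-running pull requests would distort an average.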

Where AI should not shortcut the process

Teams should not use AI to skip fundamental decisions. Be especially careful with:

- security architecture

- payment flows

- medical or legal workflows

- personal data

- permission models

- contract and pricing logic

In these areas, AI can prepare, document and test, but a person makes the decision, and that decision must remain traceable.
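One way to keep such decisions traceable is to let CI block merges that touch sensitive areas until a human decision is recorded. The sketch below assumes a hypothetical path layout and a `Decision` record (for example, a decision record ID plus the accountable person); the paths, names and IDs are invented, and how decisions are stored is up to the team.

```python
from dataclasses import dataclass
from fnmatch import fnmatchcase

# Hypothetical mapping from sensitive areas to repository paths.
SENSITIVE_AREAS = {
    "payment flows": ["src/payments/*"],
    "permission models": ["src/auth/*", "src/permissions/*"],
    "personal data": ["src/users/*"],
    "pricing logic": ["src/pricing/*"],
}


@dataclass
class Decision:
    area: str        # key from SENSITIVE_AREAS
    decided_by: str  # the accountable person
    record: str      # ID or link of the decision record


def missing_decisions(changed_files: list[str], decisions: list[Decision]) -> list[str]:
    """Sensitive areas touched by a change that have no recorded human decision."""
    touched = {
        area
        for area, globs in SENSITIVE_AREAS.items()
        for path in changed_files
        if any(fnmatchcase(path, g) for g in globs)
    }
    # A decision only counts if both a person and a record are given.
    covered = {d.area for d in decisions if d.decided_by.strip() and d.record.strip()}
    return sorted(touched - covered)


# Invented example data.
changed = ["src/payments/refund.py", "src/pricing/tiers.py", "README.md"]
decisions = [Decision("pricing logic", "Product owner", "ADR-012")]
print(missing_decisions(changed, decisions))  # ['payment flows']
```

A non-empty result would fail the pipeline until someone records who decided and where the reasoning is documented.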

A practical starting point

A good start is not a huge transformation program. A focused four-to-six-week pilot is better:

- Select one team and one project.

- Define three recurring tasks.

- Set rules for data, tools and reviews.

- Measure before and after.

- Turn lessons into standards.

Good pilot areas include internal tools, design-system work, test coverage, refactoring and documentation. Critical core workflows without tests are a poor starting point.

Why strong teams benefit more

AI does not automatically make every team more productive. It amplifies existing habits. If requirements are unclear, tests are missing and architecture is undocumented, AI produces inconsistency faster. If a team works cleanly, AI becomes a multiplier.

That is why AI adoption is also process work: better tickets, better reviews, better tests and better decision records.

Conclusion: AI accelerates learning, not only coding

The largest benefit of AI in software development is not that code appears faster. The largest benefit is that teams understand, verify and improve faster.

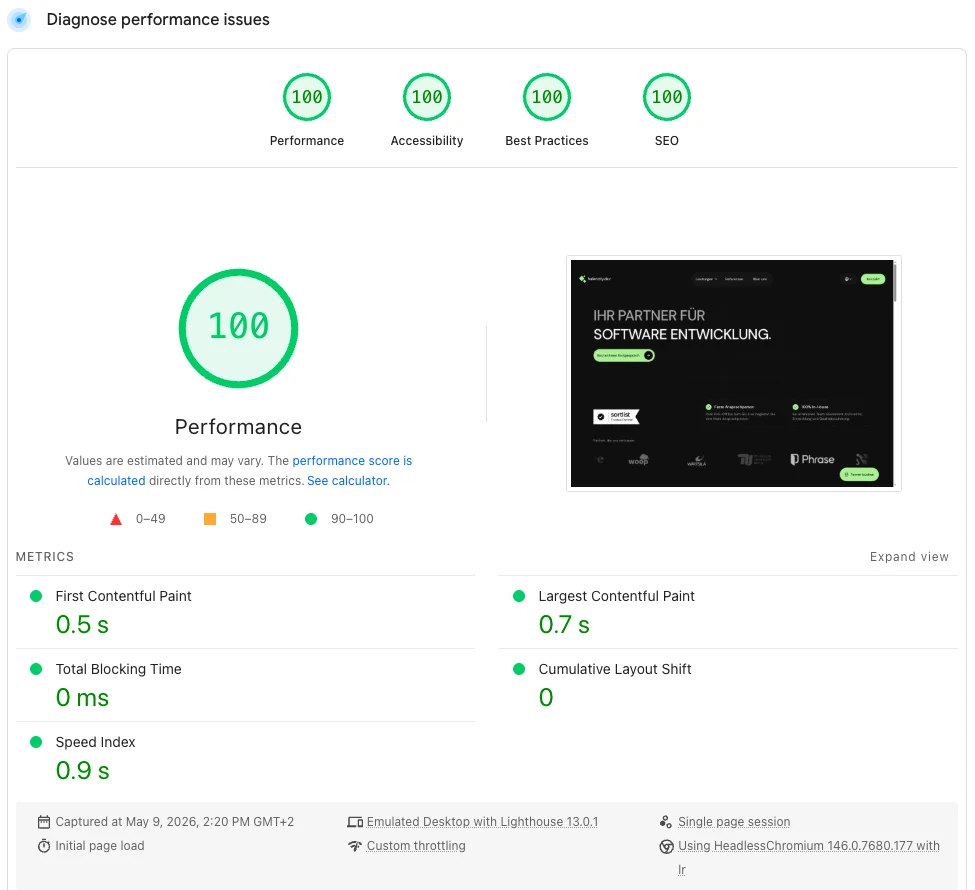

At hafencity.dev, we combine AI-supported development with clear architecture, review processes and measurable quality. This makes web app development, backend development and AI integration faster without losing control.