AI coding has become part of modern software development. Tools such as OpenAI Codex and Anthropic Claude Code are changing more than code generation. They also change how teams break down work, document decisions, write tests and prepare pull requests.

The important point: AI does not replace an engineering system. It amplifies one. A team with clear architecture, good documentation and reliable tests benefits far more than a team that gives vague requirements to a chat window and hopes a product appears.

This article explains how companies can use AI coding professionally: with Codex, Claude, repository rules, reviews and a workflow that combines speed with quality.

What Codex and Claude Code can do

OpenAI describes Codex as a coding agent that can read, modify and run code. In practice, this is useful for well-scoped tasks: bug fixes, refactoring, tests, documentation, migrations and pull request preparation.

Claude Code is relevant for similar workflows, especially where an agent works inside project context, edits files, runs shell commands and reasons through larger codebases. Neither tool is a magic autopilot. Both are productive when they get enough context and clear boundaries.

| Task | Good AI usage | Human responsibility |
|---|---|---|
| Feature scope | Structure acceptance criteria | Make the product decision |
| Implementation | Boilerplate, tests, API wiring | Check architecture and edge cases |
| Refactoring | Reduce duplication, strengthen types | Preserve behavior and compatibility |
| Review | Flag risks and missing tests | Decide whether to merge |
| Documentation | README, changelog, migration notes | Approve factual correctness |

The prompt is not the main thing

Prompts matter, but they are not the core of professional AI coding. Repository structure, testability, local conventions and a clear task frame matter more.

A good task for Codex or Claude includes:

- Goal: what should change for users or developers?

- Context: which files, APIs and decisions matter?

- Boundaries: what must not be changed?

- Quality criteria: which tests, lint rules or screenshots count?

- Review hints: what should the human check closely?

"Build the feature" is a poor task. "Add server-side phone validation to the contact form, do not change email templates, add tests for empty and international numbers, and document the error message" is a usable task.
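One way to make these five elements hard to skip is to write the brief as data rather than free text. The sketch below is an assumption, not a format that Codex or Claude Code requires: the `TaskBrief` type, `missingFields` and `renderBrief` are hypothetical names, and the contact-form task is the usable example above encoded as a brief.

```typescript
// Hypothetical structure for an agent task brief. The field names mirror the
// five elements above; they are not a format defined by Codex or Claude Code.
interface TaskBrief {
  goal: string;
  context: string[]; // files, APIs and decisions that matter
  boundaries: string[]; // what must not be changed
  qualityCriteria: string[]; // tests, lint rules or screenshots that count
  reviewHints: string[]; // what the human should check closely
}

// Returns the names of empty fields, so a vague brief can be rejected
// before an agent starts working on it.
function missingFields(brief: TaskBrief): string[] {
  const missing: string[] = [];
  if (brief.goal.trim() === "") missing.push("goal");
  for (const key of ["context", "boundaries", "qualityCriteria", "reviewHints"] as const) {
    if (brief[key].length === 0) missing.push(key);
  }
  return missing;
}

// Renders the brief as plain text for a prompt, issue or pull request.
function renderBrief(brief: TaskBrief): string {
  const section = (title: string, items: string[]) =>
    `${title}:\n${items.map((item) => `- ${item}`).join("\n")}`;
  return [
    `Goal: ${brief.goal}`,
    section("Context", brief.context),
    section("Boundaries", brief.boundaries),
    section("Quality criteria", brief.qualityCriteria),
    section("Review hints", brief.reviewHints),
  ].join("\n\n");
}

// The usable task from the text, expressed as a brief (context entry is illustrative).
const phoneTask: TaskBrief = {
  goal: "Add server-side phone validation to the contact form",
  context: ["Contact form server handler and its existing tests"],
  boundaries: ["Do not change email templates"],
  qualityCriteria: ["Tests for empty numbers", "Tests for international numbers"],
  reviewHints: ["Is the new error message documented?"],
};
```

A brief like "Build the feature" fails `missingFields` immediately, which is the point: the gap shows up before the agent runs, not during review.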

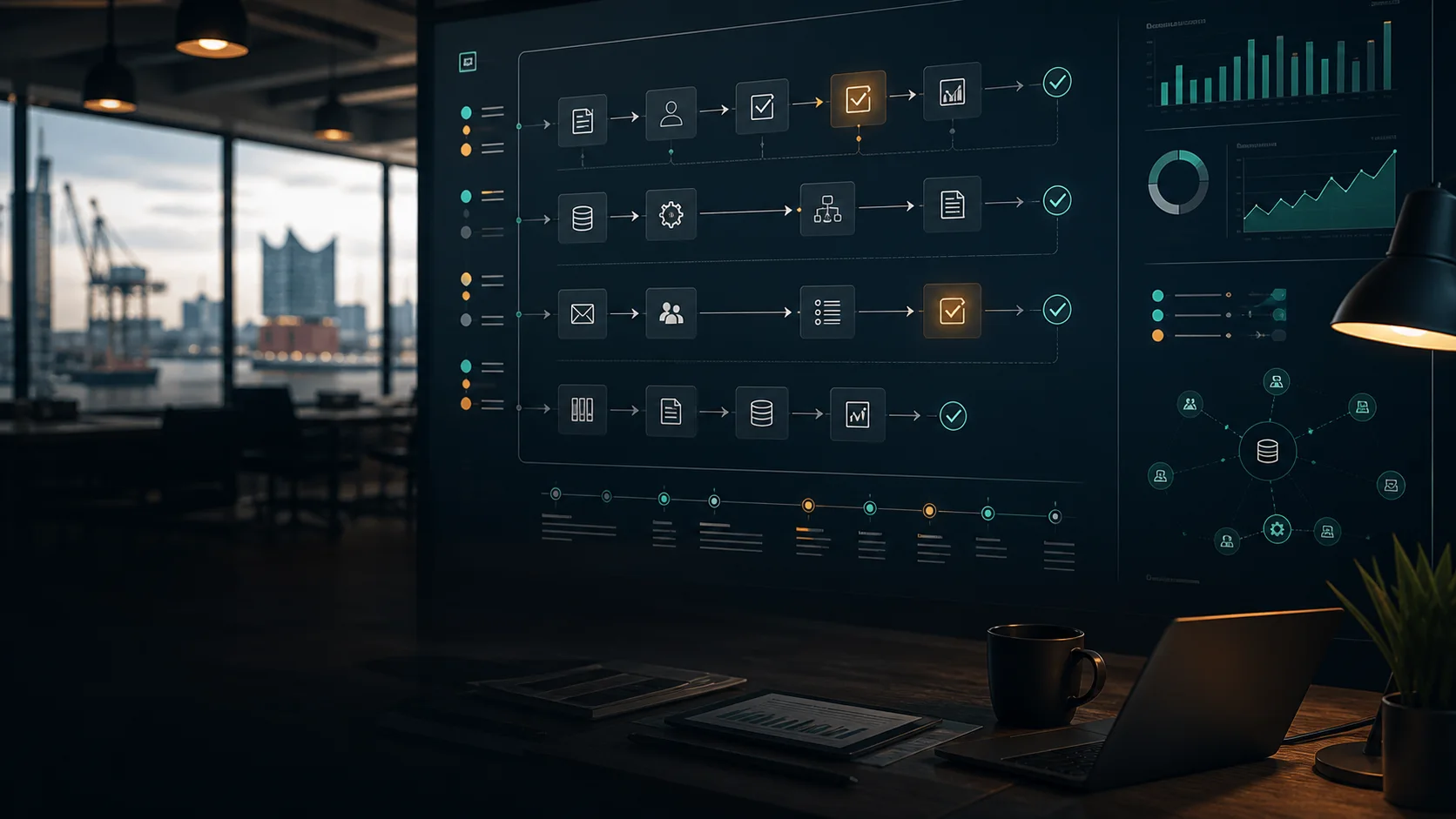

A professional AI coding workflow

AI coding works best as a structured loop.

1. Prepare the task

Before an agent starts, sharpen the goal: expected behavior, non-goals, affected components and test strategy. This saves more time than correcting the output of a vague prompt afterwards.

2. Let the agent work in isolation

The agent should work in a branch, workspace or clearly bounded area. For larger tasks, split responsibilities: one agent for tests, one for UI, one for backend behavior.
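"Clearly bounded" can be checked mechanically. A minimal sketch, assuming each agent is assigned a set of directories and that the list of changed files comes from something like `git diff --name-only main...HEAD` (the branch name, agent names and paths are hypothetical):

```typescript
// Hypothetical assignment of agents to the directories they may change.
const assignments: Record<string, string[]> = {
  tests: ["tests"],
  ui: ["src/ui"],
  backend: ["src/server"],
};

// True if `file` lies inside `dir`. The trailing slash prevents
// "tests-old/a.ts" from counting as inside "tests".
function isInside(file: string, dir: string): boolean {
  const base = dir.endsWith("/") ? dir : `${dir}/`;
  return file.startsWith(base);
}

// Returns every changed file outside the agent's assigned directories.
// An unknown agent has no assignment, so all of its changes are out of scope.
function outOfScope(agent: string, changedFiles: string[]): string[] {
  const allowed = assignments[agent] ?? [];
  return changedFiles.filter((file) => !allowed.some((dir) => isInside(file, dir)));
}
```

A non-empty result does not have to mean rejection; it tells the reviewer exactly where the agent left its lane.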

3. Verify mechanically

Lint, type checks, unit tests, end-to-end tests and visual checks should run. AI-generated code is not done just because it looks plausible.
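The rule "not done until the checks pass" can be written as a small gate. The sketch assumes the check names below map to whatever scripts a project actually defines, and it injects the runner so the same gate can call real tools in CI or fakes in a test; `verify` and `CheckName` are hypothetical names.

```typescript
// Hypothetical check names; map them to your project's own scripts.
type CheckName = "lint" | "typecheck" | "unit" | "e2e" | "visual";

interface CheckResult {
  name: CheckName;
  passed: boolean;
}

// Runs the checks in order and stops at the first failure (fail fast).
// `run` is injected so the gate itself stays independent of any tool.
function verify(
  checks: CheckName[],
  run: (name: CheckName) => boolean,
): { done: boolean; results: CheckResult[] } {
  const results: CheckResult[] = [];
  for (const name of checks) {
    const passed = run(name);
    results.push({ name, passed });
    if (!passed) return { done: false, results };
  }
  // An empty check list is not "done": nothing was verified.
  return { done: results.length > 0, results };
}
```

Treating an empty check list as a failure is deliberate. A change with no tests to run has not been verified, however plausible it looks.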

4. Human review

The human review shifts from inspecting every line to checking architecture, side effects, security boundaries, product logic, data model and maintainability.
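To shift attention from every line to the risky areas, a team can map paths to review topics. The patterns and topics below are hypothetical examples of such a mapping; each project would define its own.

```typescript
// Hypothetical path rules pointing the reviewer at high-risk areas.
const reviewRules: { pattern: RegExp; focus: string }[] = [
  { pattern: /(^|\/)(auth|security)\//, focus: "security boundaries" },
  { pattern: /(^|\/)migrations\//, focus: "data model" },
  { pattern: /(^|\/)(billing|payments?)\//, focus: "product logic" },
  { pattern: /(^|\/)config\//, focus: "side effects" },
];

// Groups changed files by the review topic they trigger.
// Files that match no rule do not appear in the result.
function reviewFocus(changedFiles: string[]): Record<string, string[]> {
  const focus: Record<string, string[]> = {};
  for (const file of changedFiles) {
    for (const rule of reviewRules) {
      if (rule.pattern.test(file)) {
        (focus[rule.focus] ??= []).push(file);
      }
    }
  }
  return focus;
}
```

The output can go straight into the pull request description, so the reviewer starts with the migrations and auth changes instead of a UI tweak.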

5. Document what was learned

If an agent repeats the same mistake, put the lesson into local rules, README files, tests or agent instructions. The system improves with each task.
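A lesson is most durable when it fails the build. As a sketch, suppose an agent keeps importing a deprecated HTTP client (both module names below are hypothetical). The rule can be captured as a check that runs in CI:

```typescript
// A repeated agent mistake captured as a rule. Module names are hypothetical.
interface ImportRule {
  forbidden: string; // module specifier the agent keeps reaching for
  useInstead: string; // the project's agreed alternative
}

const importRules: ImportRule[] = [
  { forbidden: "legacy-http-client", useInstead: "src/lib/api-client" },
];

// Collects specifiers from ES `... from "x"` clauses only; dynamic imports
// and require() calls are not covered by this simple pattern.
function importViolations(source: string, rules: ImportRule[]): string[] {
  const pattern = /from\s+["']([^"']+)["']/g;
  const specifiers: string[] = [];
  let match: RegExpExecArray | null;
  while ((match = pattern.exec(source)) !== null) {
    specifiers.push(match[1]);
  }
  return rules
    .filter((rule) => specifiers.includes(rule.forbidden))
    .map((rule) => `Import "${rule.forbidden}" is not allowed; use "${rule.useInstead}" instead.`);
}
```

In a real TypeScript project the same rule is usually expressed with ESLint's `no-restricted-imports`; the point is that the lesson lives in the toolchain, where both humans and agents hit it.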

Codex or Claude: Do teams need to choose?

Not necessarily. Many teams will use several AI coding tools because the tools differ in where they run and what they do well. The tool brand matters less than governance.

Companies should ask:

- Which repositories and data may the tool access?

- How are secrets protected?

- Are changes traceable?

- Is approval required before production actions?

- Does the tool fit Git, CI and project management?

- Can results be verified with tests?
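Several of these questions can be answered with an explicit policy instead of habit. The sketch below is a hypothetical policy model, not a configuration format of Codex or Claude Code: it allows access only to listed repositories, denies reads of secret files, and requires human approval for production deploys.

```typescript
// Hypothetical agent policy; field names are illustrative.
interface AgentPolicy {
  allowedRepos: string[];
  deniedPathPatterns: RegExp[]; // e.g. secrets that must never be read
  approvalRequired: string[]; // actions that need a human before they run
}

type AgentAction =
  | { kind: "read"; repo: string; path: string }
  | { kind: "deploy"; repo: string; environment: string };

type Decision = "allow" | "deny" | "needs-approval";

function decide(policy: AgentPolicy, action: AgentAction): Decision {
  if (!policy.allowedRepos.includes(action.repo)) return "deny";
  switch (action.kind) {
    case "read": {
      const path = action.path;
      return policy.deniedPathPatterns.some((p) => p.test(path)) ? "deny" : "allow";
    }
    case "deploy":
      return policy.approvalRequired.includes(`deploy:${action.environment}`)
        ? "needs-approval"
        : "allow";
  }
}

const policy: AgentPolicy = {
  allowedRepos: ["website"],
  deniedPathPatterns: [/(^|\/)\.env(\..*)?$/, /(^|\/)secrets\//],
  approvalRequired: ["deploy:production"],
};
```

Logging every `decide` result alongside the action would also answer the traceability question.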

A fast tool that is hard to control creates risk. A tool that fits review, testing and documentation may be more productive even when it feels less spectacular.

Common AI coding mistakes

The first mistake is too much trust. AI can produce confident but wrong explanations, misremember APIs or miss edge cases. Relevant output needs verification.

The second mistake is too little context. If agents do not know architectural decisions, they invent new patterns and create inconsistency.

The third mistake is weak test culture. Without tests, AI mostly increases the amount of code, not the quality.

The fourth mistake is using prototype-style "vibe coding" in production systems. Free exploration can be useful for prototypes. Customer portals, payment flows, medical workflows and internal core systems need engineering discipline.

Where AI coding creates immediate value

AI coding is strongest on tasks that are easy to verify:

- adding tests for existing behavior

- strengthening TypeScript types

- improving form validation

- updating API clients

- writing migration notes

- completing small UI states

- fixing imports and build errors

- deriving documentation from code
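The contact-form task described earlier shows why such tasks suit AI well: the result is small, typed and checkable with a table of cases. A minimal sketch, assuming numbers are accepted in E.164-style international format after stripping spaces, dashes and parentheses; `validatePhone`, the error texts and the digit bounds are hypothetical choices for illustration, and production code may prefer a dedicated phone-number library.

```typescript
// Discriminated union: callers cannot read `value` without checking `ok` first.
type PhoneResult = { ok: true; value: string } | { ok: false; error: string };

const EMPTY_ERROR = "Please enter a phone number.";
const FORMAT_ERROR = "Please enter a phone number in international format, e.g. +49 40 1234567.";

function validatePhone(input: string): PhoneResult {
  // Remove common separators before checking the format.
  const normalized = input.replace(/[\s\-()]/g, "");
  if (normalized === "") return { ok: false, error: EMPTY_ERROR };
  // "+", a country code not starting with 0, then 7 to 15 digits in total
  // (the lower bound is an arbitrary choice for this sketch).
  if (!/^\+[1-9]\d{6,14}$/.test(normalized)) return { ok: false, error: FORMAT_ERROR };
  return { ok: true, value: normalized };
}

// Table-driven cases: easy to read, easy for a reviewer to extend.
const cases: { input: string; ok: boolean }[] = [
  { input: "", ok: false },
  { input: "   ", ok: false },
  { input: "+49 40 1234567", ok: true },
  { input: "+1 (415) 555-0100", ok: true },
  { input: "040 1234567", ok: false }, // no country code
  { input: "+49 abc", ok: false },
];

for (const c of cases) {
  if (validatePhone(c.input).ok !== c.ok) {
    throw new Error(`Unexpected result for "${c.input}"`);
  }
}
```

An agent can draft both the function and the table; the human mainly has to decide whether the accepted format and the error messages are the right product decision.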

Tasks with unclear product strategy, deep domain logic or high security impact are harder. AI can prepare them, but it should not make the final decision.

What companies need to change

AI coding is not only a developer tool. It changes planning and estimation. Tasks can become smaller. Prototypes appear faster. Reviews become more important. Documentation becomes part of the workflow.

That also means project managers, product owners and tech leads must define quality more clearly. Vague tickets do not become better with AI. They are just implemented faster.

Conclusion: AI coding is a process advantage

Codex, Claude Code and similar tools do not replace experienced developers. They help teams that know what they want to build and how quality will be measured.

At hafencity.dev, we use AI coding where it improves the development process: analysis, implementation, tests, refactoring, documentation and review preparation. For companies, the largest value appears when this way of working becomes part of a clear AI strategy and professional software development.